AI: Growth, Control, and the Future of Creative Value

- Henry Marsden

- Apr 10

For all the noise around AI over the past year (new models, new capabilities, new fear), the most important developments haven’t been technical, but structural.

In recent months, the UK government has tried to start defining an approach to one of the most consequential economic questions of the next decade: How do you build a world-leading AI sector without undermining the value of the content it depends on?

The publication of the Copyright and AI Impact Assessment doesn’t answer that question outright, but it does something arguably more important: it makes clear that the UK recognises the tension at the heart of it.

AI and the creative industries are not separate forces. They are, in the government’s own words, intertwined.

A Familiar Story

There’s a natural temptation to treat AI as something entirely new, distinct from previous technology waves. In practice, however, it fits a much older pattern in many ways.

Each major technological shift in the creative industries has sparked the same underlying debate. From sampling to streaming, each wave has raised concerns about replacement, disruption, and loss of control. And each time, the outcome has been more nuanced.

Time and again, the technology itself hasn’t eliminated creativity, but it has, at a minimum, changed the economics around it. AI is doing exactly the same thing now, but at a scale and speed that does feel new.

The government’s own projections underline this, with suggestions that AI adoption could add tens of billions to the UK economy within a few years (driven primarily by productivity gains). At the same time, the creative industries already represent one of the UK’s most valuable sectors, economically and, just as importantly, culturally.

This isn’t a marginal policy discussion. It is about how two of the country’s most important sources of value interact and intersect. Ultimately, it’s about who benefits from that interaction.

Data and Ownership

AI systems need data. Not just any data, but vast amounts of high-quality, human-created content to train upon. Of course, that content doesn’t appear out of nowhere- it is written, composed, recorded, designed and owned. And yet much of it has already been used to train AI systems without clear permission or compensation- particularly in jurisdictions with “greyer” areas of copyright law.

The government acknowledges this reality quite openly. It also acknowledges something equally important: that without access to this data, many of today’s AI systems would not exist in their current form. Together, these two facts create a central tension.

AI creates enormous downstream value. But it is built on already valuable upstream input. The question is whether those two things can and will remain connected economically.

There are (of course) already discussions encouraging the training of models on the output of other models. Holding this newsletter’s exploration in mind, this "recycled training" is likely only to muddy the waters on licensing (as some may hope), and will almost certainly erode the quality of any subsequent model.

Value Drift

What is clear from the UK’s position is that it is challenging to encourage the creation of new value without sacrificing or eroding the inherent value it is built upon.

Push too far towards restriction, and you risk slowing innovation (or so the argument goes), or pushing development into other jurisdictions where rules are more permissive.

Over time, if creative work can be used freely at scale without meaningful compensation, the incentive structure that copyright is built to protect will weaken. Eventually this will have direct implications for the quality and supply of creative output, which would be a sad day indeed.

This is not a theoretical concern. The government’s report itself points to the already tangible reality that AI is beginning to capture a growing share of value traditionally earned by creators and rightsholders. The output itself is competing against its own inputs!

That single observation recognises that this isn’t just about technology but, materially, about economic distribution.

A Market Trying to Form Itself

What makes the current moment particularly interesting is that the market solution is already trying to emerge, but hasn’t quite settled.

Licensing deals are beginning to appear, with some larger rightsholders striking agreements with AI companies. New intermediaries are positioning themselves as bridges between content and models, and companion technical tools for tracking, attribution and control are also emerging (AI tools to detect use of AI, anyone?).

Much of it is not yet fully formed. The licensing market is early, fragmented, and largely concentrated among the biggest players- as naturally happens with new technology disruption. A consequence of this is that transparency remains limited, and for many creators- particularly smaller ones- it is still unclear whether they are participating in this new value chain at all.

The UK government’s instinct, at least for now, is to let this market develop rather than impose a rigid framework too early- an approach that is logical, but does carry risk.

Markets don’t tend to evolve in ways that distribute value evenly, especially when information is asymmetric and bargaining power is particularly concentrated.

AI as a Creative Multiplier

Amid the policy debate, it’s easy to lose sight of something more fundamental. AI is not only competing with creativity, but also amplifying it.

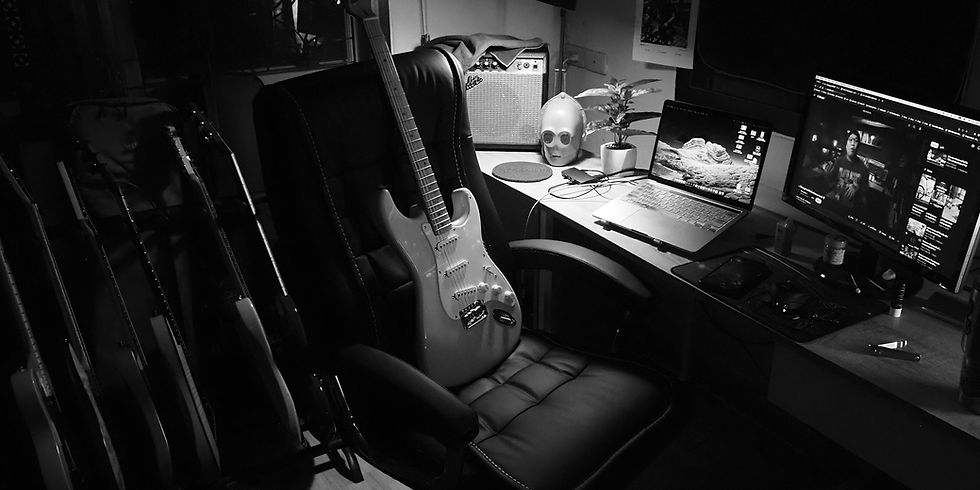

The most interesting use cases emerging today are not about replacement, but augmentation. Writers are using AI to explore ideas more quickly. Producers are using it to experiment with new sounds. Mixing and mastering engineers are incorporating it into entirely new workflows.

This should not be surprising. Creators have always adopted new tools faster and more imaginatively than expected, and arguably at times quicker than the technology community itself. That appetite for experimentation is, in many ways, one of the key ingredients that define them. As mentioned previously, synths didn’t remove musicianship and DAWs didn’t remove production skill.

AI is unlikely to remove creativity- but it will change what creativity looks like, and how efficiently it can be crafted and distributed.

The Principle That Matters

This brings us back to the core issue. If AI is a force multiplier for creativity, and if it derives value from creative work, then the economic link between the two needs to be meaningfully preserved.

Copyright, at its core, is about ensuring that creative output remains economically viable.

The UK government’s current position reflects an attempt to balance that principle with a desire to remain globally competitive in AI. Whether that balance can actually be achieved remains an open question.

What feels clear is that this is not a temporary moment. Much as with the internet before it, AI is becoming infrastructure. When infrastructure is built, the most important decisions are not about the technology itself, but about how value flows through it.

This leaves the UK (and other governments globally) with a choice: to allow value to drift away from the creators who underpin it, or to uphold systems (technical, legal and commercial) that ensure they remain part of it.

The decisions made now will shape not just how AI develops, but who ultimately benefits from it.
