Music Publishing Isn’t Really a Music Business Anymore

- Henry Marsden

- Feb 4

Updated: Feb 26

Last week, at Music Ally Connect in London, I heard a line that has stayed with me. It wasn’t framed as a provocation, or even as a big idea. It was delivered matter-of-factly, as an observation drawn from experience, by Caroline Champarnaud of SACEM:

“We are a de facto data company. We don’t work in the music industry; we work in the data industry.”

It speaks deeply to the operational reality of a modern collecting society, though it resonates far beyond that context, into the very fabric of music publishing and copyright administration. The more I’ve thought about it since, the more it feels like an accurate description of the publishing ecosystem, and the more likely it seems that those who build with this in mind will mature beyond those who do not.

This is not a claim about creativity, repertoire, or cultural relevance. Music remains the product: the ‘language’ or fabric that connects. Songs remain the reason the industry exists. But the day-to-day work of publishers, CMOs, administrators and funds now looks more like a large-scale data operation than a traditional music business.

That shift didn’t happen overnight, and it didn’t happen by design. It’s emerged as a consequence of how the underlying economics and infrastructure of music have evolved.

Scale has quietly rewritten the job description

One of the clearest signals of this shift is the volume of data now being processed.

During the same conference, Laurent Hubert noted that publishing royalty lines are growing at roughly 30–40% year-on-year, and that AMRA alone processed more than 100 billion lines in 2025. Numbers like that are difficult to grasp, but their implications are straightforward: at this scale, publishing administration is a very real engineering problem.
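
To make those figures a little more tangible, here is a rough back-of-the-envelope sketch in Python. It assumes, purely for illustration, that the quoted 30–40% growth rate holds constant and applies to a 100 billion line baseline; neither assumption comes from the talk itself, and the function names are my own.

```python
import math


def project_lines(base_lines: float, annual_growth: float, years: int) -> float:
    """Compound a starting line count forward at a constant annual growth rate."""
    return base_lines * (1 + annual_growth) ** years


def doubling_time_years(annual_growth: float) -> float:
    """Years needed for volume to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


# Assumption: 100 billion lines as the starting point, constant growth thereafter.
BASE_LINES_2025 = 100e9

for growth in (0.30, 0.40):
    after_three = project_lines(BASE_LINES_2025, growth, 3)
    print(
        f"{growth:.0%} growth: about {after_three / 1e9:.0f}bn lines after 3 years, "
        f"doubling every {doubling_time_years(growth):.1f} years"
    )
```

Under those assumptions, volume doubles roughly every two to two-and-a-half years, and a 100 billion line workload becomes somewhere between about 220 and 274 billion lines within three years.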

Royalty processing has moved well beyond the world of periodic statements, pivot tables and summary reports. Modern publishing operations ingest continuous, line-level reporting from hundreds of digital partners, across hundreds of territories, covering an expanding range of usage types. Each line must be normalised, matched, validated, priced, allocated, and ultimately paid.
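
As a deliberately simplified sketch of what ‘normalised, matched, validated, priced, allocated’ means for a single usage line, consider the Python below. Everything in it is hypothetical: the `UsageLine` fields, the lookup tables, the per-play rate, and the exact-match rule are illustrative assumptions, not a description of any real society’s or DSP’s format. Real matching is far fuzzier than a dictionary lookup.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class UsageLine:
    """One hypothetical line of DSP reporting."""
    partner: str
    territory: str
    title: str
    writer: str
    plays: int


# Hypothetical reference data, invented for this sketch.
WORKS = {("my song", "a writer"): "W001"}          # normalised (title, writer) -> work id
RATES = {("dsp_x", "GB"): Decimal("0.0005")}       # per-play rate by partner and territory
SHARES = {"W001": {"Publisher A": Decimal("0.5"), "Publisher B": Decimal("0.5")}}


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivially different spellings compare equal."""
    return " ".join(text.lower().split())


def process_line(line: UsageLine) -> dict:
    """Run one line through normalise -> match -> validate -> price -> allocate."""
    work_id = WORKS.get((normalise(line.title), normalise(line.writer)))
    if work_id is None:
        return {"status": "unmatched", "payable": {}}
    if line.plays < 0:
        return {"status": "invalid", "payable": {}}
    rate = RATES.get((line.partner, line.territory))
    if rate is None:
        return {"status": "unpriced", "payable": {}}
    amount = rate * line.plays
    payable = {owner: amount * share for owner, share in SHARES[work_id].items()}
    return {"status": "allocated", "work_id": work_id, "payable": payable}


result = process_line(UsageLine("dsp_x", "GB", "  My  SONG ", "A Writer", 1000))
print(result["status"], result["payable"])
```

Even in this toy version, three of the four possible outcomes are failures that leave money unallocated, which is the point: at billions of lines, the exceptions are where the work is.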

That work is not driven by creative decision-making or deal-making, but rather by data pipelines, reconciliation logic, matching models, and increasingly complex analytical systems. The music still matters, but the operational workload is utterly dominated by the mechanics of data.

Digital consumption reshaped the infrastructure layer

The move to digital consumption did more than increase volume; it also altered the structure and cadence of reporting.

DSPs generate data continuously, globally, and at extreme levels of granularity. They are tech companies first, native to data flows of a volume more usually found in the financial industries. Their reporting systems are designed for internal accounting and platform optimisation, not necessarily for the complexities of music copyright. As a result, publishers and CMOs are ingesting datasets shaped by technology companies rather than by long-established media and entertainment institutions.

The industry often talks about transparency, but transparency at this scale also requires interpretation. Raw reporting may be plentiful, but understanding is harder to come by.

Financialisation raised the bar on data quality

The financialisation of music rights has also accelerated this transition. As publishing catalogs have become assets, expectations around certainty and risk have increased.

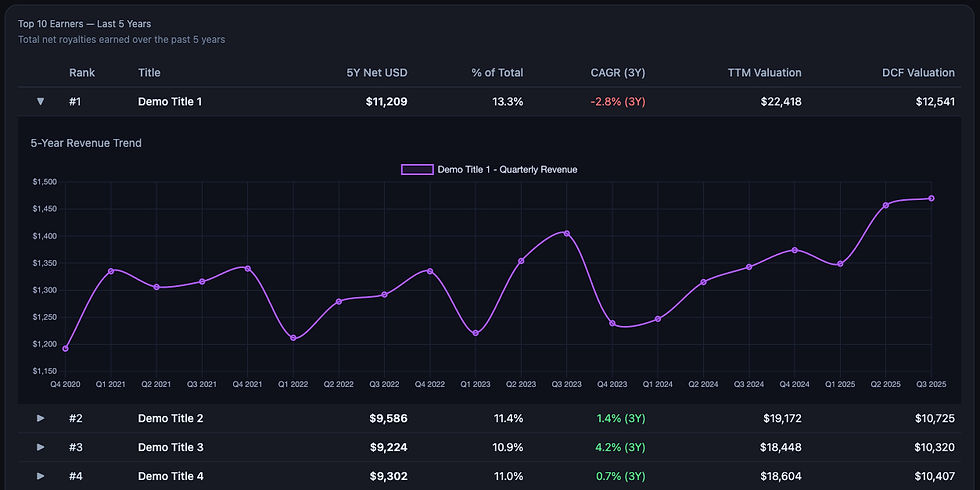

For funds, lenders, and institutional investors, revenue underpins valuation models, debt structures, and return forecasts. That makes the provenance of data, and the accuracy of the analytics built on top of it, mission critical, all the more so where fiduciary responsibilities are involved. In an environment where rights are concurrently fragmented and ‘re-aggregated’ (sold and re-sold), small inconsistencies propagate very quickly and can end up blocking material revenue.

A side note to consider: is the cost of these propagated issues, or of moving catalogs, baked into deals? Catalogs with fundamentally well-organised data (and the registrations that follow from it) have a significantly lower ‘cost of doing business’ when they are transferred between owners.

A missing share, a duplicated work, or a mis-mapped usage line directly affects cashflows and confidence in forward-looking models. As catalogs scale, these issues stop being isolated problems and begin to shape portfolio-level outcomes.
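
Two of those problems, the missing share and the duplicated work, are simple enough to sketch as catalog checks in Python. The record layout here (a dict with `title`, `writers`, and percentage `shares`) and the exact-match duplicate rule are assumptions made for illustration; real catalogs would need identifiers such as ISWCs and much fuzzier comparison.

```python
from collections import defaultdict
from decimal import Decimal


def find_share_gaps(works: dict) -> dict:
    """Return {work_id: missing percentage} for works whose shares do not total 100."""
    gaps = {}
    for work_id, work in works.items():
        total = sum(work["shares"].values(), Decimal("0"))
        if total != Decimal("100"):
            gaps[work_id] = Decimal("100") - total
    return gaps


def find_duplicates(works: dict) -> list:
    """Group work ids that share the same normalised title and writer set."""
    groups = defaultdict(list)
    for work_id, work in works.items():
        title = " ".join(work["title"].lower().split())
        writers = tuple(sorted(" ".join(w.lower().split()) for w in work["writers"]))
        groups[(title, writers)].append(work_id)
    return [sorted(ids) for ids in groups.values() if len(ids) > 1]


# Hypothetical three-work catalog, invented for this sketch.
catalog = {
    "W001": {"title": "My Song", "writers": ["A Writer"],
             "shares": {"Publisher A": Decimal("50"), "Publisher B": Decimal("50")}},
    "W002": {"title": "my  song", "writers": ["a writer"],
             "shares": {"Publisher A": Decimal("50")}},
    "W003": {"title": "Other Song", "writers": ["B Writer"],
             "shares": {"Publisher C": Decimal("100")}},
}

print("Share gaps:", find_share_gaps(catalog))
print("Possible duplicates:", find_duplicates(catalog))
```

Here the second record is both a likely duplicate of the first and missing half its ownership, the kind of compound issue that is trivial to spot in three works and expensive to spot in three hundred thousand.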

This is why data lineage, auditability, and reproducibility have become central concerns. They are the cornerstones that reduce risk and exposure. The question increasingly asked is not simply what a work earned, but how robust that number is, and what assumptions underpin it.

From data accumulation to confident decision-making
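
One minimal way to picture lineage and reproducibility is to store, next to every computed figure, a fingerprint of the exact inputs and the version of the rules that produced it. The Python sketch below does this with a SHA-256 hash; the field names, the `rule_version` label, and the use of integer micro-units for money are all assumptions made for illustration rather than any established industry practice.

```python
import hashlib
import json


def fingerprint(records: list) -> str:
    """Deterministic SHA-256 hash of the input records (keys sorted for stable output)."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def earnings_with_lineage(lines: list, rate_micros: int, rule_version: str) -> dict:
    """Compute total earnings and record what the number was derived from."""
    total_micros = sum(line["plays"] for line in lines) * rate_micros
    return {
        "total_micros": total_micros,        # the headline number
        "input_hash": fingerprint(lines),    # which exact inputs produced it
        "rate_micros": rate_micros,          # which pricing assumption was used
        "rule_version": rule_version,        # which version of the logic ran
    }


# Hypothetical usage lines, invented for this sketch.
lines = [{"work_id": "W001", "plays": 1000}, {"work_id": "W003", "plays": 250}]
first = earnings_with_lineage(lines, rate_micros=500, rule_version="v1")
second = earnings_with_lineage(lines, rate_micros=500, rule_version="v1")
print(first == second)  # identical inputs and rules reproduce the identical record
```

If a number later changes, comparing the stored hash, rate, and rule version shows whether the inputs, the assumptions, or the logic moved, which is most of what an auditor wants to know.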

As we’ve explored previously, for publishers and rights holders the challenge is no longer collecting data but knowing what to do with it, and when to trust it.

Operational decisions increasingly rely on analytical outputs: how aggressively to pursue claims, how to prioritise works for review, how to underwrite a catalog, how to forecast income under changing platform dynamics or licensing arrangements. Each of those decisions depends on an underlying model of reality, and when that model is poorly understood, confidence in the outcome is fragile. It is a prime example of ‘garbage in, garbage out’: if the mechanics of what happens inside the box are not understood, the output cannot be relied upon.

This is where the distinction between data and insight becomes material. Insight is not simply a metric or a visualisation. It reflects an understanding of why a number looks the way it does, how sensitive it is to underlying assumptions, and how it is likely to behave as conditions change.

As a fund put it to me recently, “We don’t want more data; we want knowledge and insight”. In capital allocation, confidence matters as much as precision. Numbers that cannot be explained, stress-tested, or contextualised are difficult to act on, regardless of how polished they appear.

As reporting volumes grow and systems become more complex, the problem only intensifies. More data increases the surface area for inconsistency, and errors scale in parallel with it. More automation increases the distance between raw inputs and final outputs. Without a clear line of sight into how conclusions are reached, decision-making becomes riskier by default.

The practical question for organisations, then, is not how much data they can see, but how well they understand the logic that turns that data into conclusions they can bet the house on.

An industry still built on songs, run on systems

For rights holders, engagement with data is unavoidable. It has become a core operating concern rather than a supporting function.

Data strategy now sits alongside repertoire strategy as a determinant of long-term outcomes. Tool selection and vendor dependencies shape internal understanding as much as they shape efficiency. This also means internal literacy around data is increasingly important, even where infrastructure is outsourced.

As data volumes continue to grow, the cost of poor assumptions increases. Small errors scale quickly, and their effects are often felt far from their point of origin.

The music itself remains the foundation of the publishing industry. Songs are the asset, and creativity remains the source of value. But the systems that govern how that value is tracked, allocated, and monetised operate at a scale and complexity that aligns far more closely with the data industry.

Understanding that shift helps explain many of the pressures the sector is experiencing, from infrastructure strain and analytical opacity to growing scrutiny from financial stakeholders.

The organisations that perform best in this environment tend to be those that treat data as a first-order concern and invest accordingly.
